Automation in genomics is often framed as a technical optimization (faster pipelines, better orchestration, cheaper compute), but its real ROI is determined far downstream, in clinical throughput, operational risk, and time-to-decision.

For senior leaders, however, the real question is different.

What is the business return of automating sample-to-report workflows, and when does automation become a strategic requirement rather than an engineering choice?

Across diagnostics labs, precision medicine programs, and genomics-driven healthcare organizations, sample-to-report workflows sit at the core of operational performance. They directly influence turnaround time, reproducibility, audit readiness, cloud spend, and ultimately, trust in genomic insight.

Many organizations reach a point where early success turns into operational strain. At that moment, automation is no longer about efficiency; it is about risk, cost predictability, and platform credibility.

This article examines the real ROI of automating sample-to-report workflows, grounded in production realities and industry benchmarks, and helps decision-makers evaluate readiness, architecture, and partners before scale makes change significantly more expensive.

Precision medicine is no longer confined to exploratory programs. Genomics workflows now underpin:

According to McKinsey, 60–70% of data and AI initiatives fail to reach sustained production, with the dominant causes being data readiness, operational fragility, and governance gaps, not analytical capability. In healthcare and life sciences, these failures surface later and cost more because regulatory and clinical dependencies delay visibility into systemic weaknesses.

Sample-to-report workflows concentrate several executive concerns:

Once genomics workflows become operationally critical, automation becomes less about speed and more about risk containment and economic control.

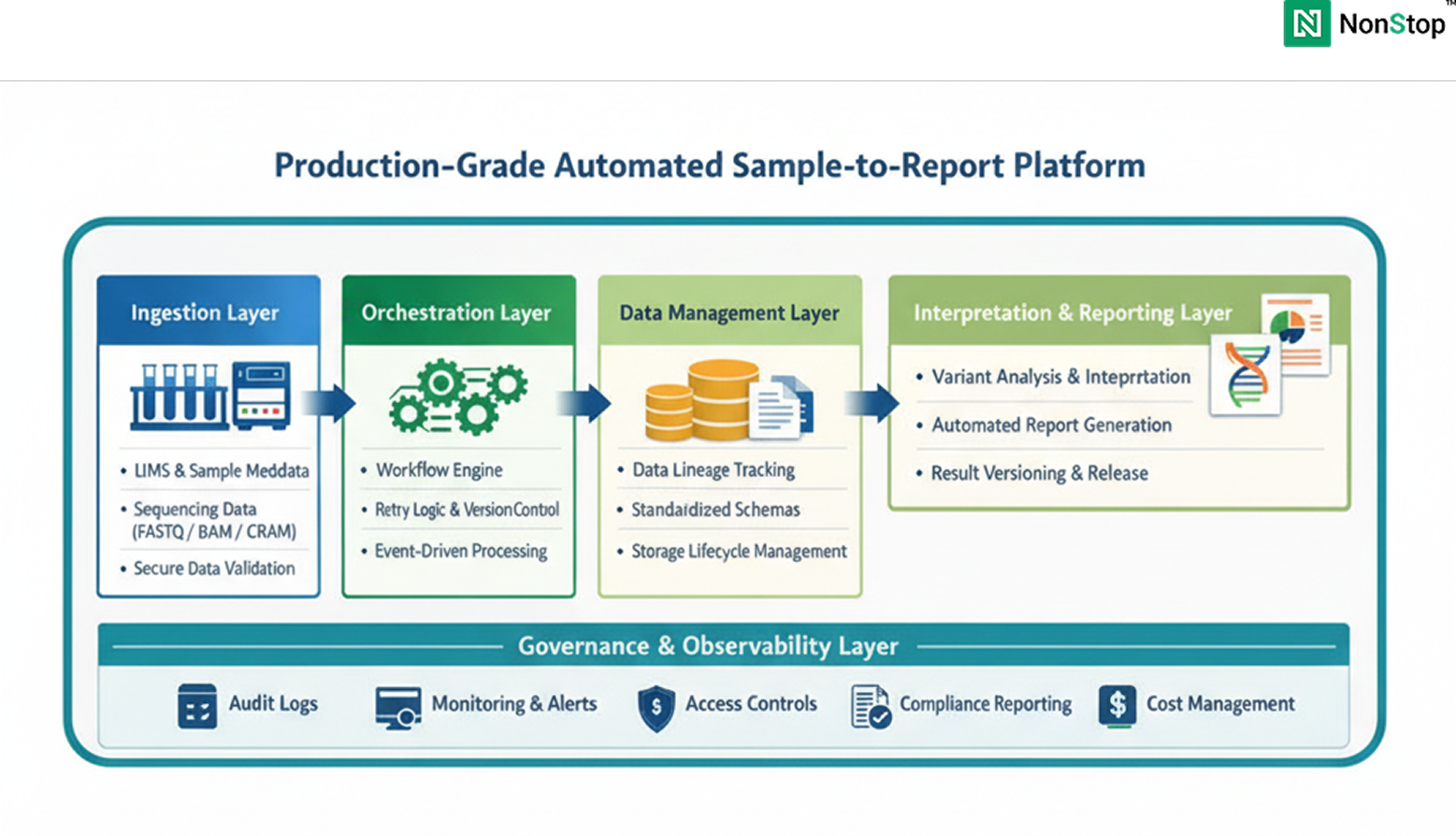

From a platform engineering perspective, sample-to-report is not a single pipeline. It is a multi-system, end-to-end workflow that typically spans:

In many organizations, these steps evolved independently. Early success is achieved through scripts, manual checkpoints, and loosely coupled systems. This approach does not fail immediately; it fails under repetition, reanalysis, and regulatory scrutiny.

Automation, in this context, is not about accelerating individual steps. It is about making the entire chain repeatable, observable, governable, and cost-predictable over time.

1. Turnaround Time Variability as a Business Risk

Organizations often measure average turnaround time. What undermines trust clinically and commercially is variance.

Studies published in Nature Genetics and NEJM show that workflow variability driven by manual intervention, ad hoc reruns, and inconsistent exception handling contributes materially to delayed genomic interpretation.

From a leadership standpoint, variance translates to:

Automation reduces variance by enforcing standardized execution paths and controlled failure recovery, even when raw compute performance remains unchanged.
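The difference between average and variance can be made concrete with a minimal sketch. The turnaround figures below are hypothetical and chosen only so that both workflows share the same mean; the function name `tat_summary` is likewise an illustrative assumption, not part of any existing tool.

```python
import math
import statistics


def tat_summary(hours):
    """Summarize turnaround times (in hours) for a batch of samples."""
    ordered = sorted(hours)
    # Nearest-rank 95th percentile: the smallest observed value with at
    # least 95% of samples at or below it.
    rank = max(1, math.ceil(0.95 * len(ordered)))
    return {
        "mean": statistics.mean(ordered),
        "stdev": statistics.pstdev(ordered),
        "p95": ordered[rank - 1],
    }


# Hypothetical data: both workflows average 48 hours per sample.
manual_hours = [30, 36, 40, 44, 48, 48, 52, 56, 60, 66]
automated_hours = [46, 47, 47, 48, 48, 48, 48, 49, 49, 50]

manual = tat_summary(manual_hours)
automated = tat_summary(automated_hours)

print(manual)
print(automated)
```

Both summaries report the same mean, yet the spread and the 95th-percentile turnaround differ sharply. A dashboard that tracks only the average would not distinguish the two workflows.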

2. Reproducibility Debt and the Cost of Reanalysis

Genomic data is not static. Clinical guidance, reference datasets, and interpretation logic evolve. The NIH has repeatedly highlighted that genomic data may be reinterpreted multiple times over a patient’s lifetime.

Without automated lineage and versioning:

This creates what can be described as reproducibility debt, a liability that compounds quietly until audits, clinical questions, or regulatory reviews force costly reconstruction.
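As an illustration of what automated lineage and versioning can mean in practice, the sketch below records the inputs and versions behind a single run and derives a stable fingerprint from them. The field names, version strings, and the `RunRecord` class are hypothetical; a real platform would choose its own schema.

```python
import hashlib
import json
from dataclasses import asdict, dataclass


def sha256_bytes(data: bytes) -> str:
    """Return the hex SHA-256 digest of raw input data."""
    return hashlib.sha256(data).hexdigest()


@dataclass(frozen=True)
class RunRecord:
    """Hypothetical lineage record for one sample-to-report run."""
    sample_id: str
    input_sha256: str
    pipeline_version: str
    reference_version: str
    annotation_db_version: str

    def fingerprint(self) -> str:
        # Serialize with sorted keys so identical records always hash identically.
        payload = json.dumps(asdict(self), sort_keys=True).encode("utf-8")
        return hashlib.sha256(payload).hexdigest()


raw_input = b"placeholder for sequencing data"  # stand-in for a real input file

original = RunRecord("S-001", sha256_bytes(raw_input), "1.4.0", "GRCh38", "2023-01")
repeat = RunRecord("S-001", sha256_bytes(raw_input), "1.4.0", "GRCh38", "2023-01")
reanalysis = RunRecord("S-001", sha256_bytes(raw_input), "1.4.0", "GRCh38", "2024-06")

print(original.fingerprint() == repeat.fingerprint())      # same inputs and versions
print(original.fingerprint() == reanalysis.fingerprint())  # annotation database changed
```

Storing a record like this alongside every report lets a team answer "what produced this result?" from the system itself rather than from reconstruction after the fact.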

3. Compliance Overhead as a Scaling Tax

HIPAA, SOC 2, and GDPR guidance increasingly emphasize:

Manual workflows externalize compliance into documentation and human process. Automated workflows embed compliance into system behavior.

According to Deloitte, compliance preparation can consume 15–25% of operational capacity in healthcare data systems that lack built-in governance. Automation shifts this burden from people to platforms.

For decision-makers, ROI manifests across four dimensions.

1. Operational Cost per Sample

McKinsey and OECD studies on healthcare automation consistently show 15–30% reductions in operational cost per unit when workflows are standardized and governed. In genomics platforms, savings typically arise from:

These savings are structural, not incremental.

2. Predictability of Time-to-Insight

Automation commonly delivers 20–40% reductions in turnaround time variability. For clinical and commercial genomics programs, predictability often matters more than raw speed, enabling capacity planning and stakeholder confidence.

3. Reduced Audit and Regulatory Risk

Automated workflows provide:

HIMSS research shows that systems with embedded traceability experience fewer audit findings and lower remediation effort. This risk reduction is difficult to quantify precisely, but highly material at scale.

4. Platform Readiness for AI and Advanced Analytics

Across industries, Gartner reports that only 20–30% of AI initiatives reach sustained production. In healthcare, failures are driven primarily by governance and reproducibility gaps.

Automation is a prerequisite for AI in precision medicine, not an optimization step.

A common failure pattern is equating automation with adopting a workflow engine or scheduler. Tools are necessary but insufficient.

ROI emerges only when automation is treated as a platform capability, encompassing:

Organizations that approach automation as a tooling exercise typically re-architect later, at significantly higher cost.

A production-grade platform typically includes:

Across organizations, the same patterns recur:

Gartner estimates that retrofitting production readiness after scale can cost 3–5× more than designing for it upfront.

NonStop is not a genomics lab, diagnostics provider, or clinical decision-maker.

NonStop operates as a digital platform engineering partner, specializing in:

Organizations engage NonStop when genomics initiatives transition from pilot to production, and leadership requires:

NonStop’s role is to design and build production-ready digital platforms that are vendor-neutral, compliance-aware, and engineered for longevity. The focus is not on owning scientific logic, but on ensuring the systems that support it can be trusted at scale.

NonStop partners with genomics and healthcare organizations to design and build production-grade automation platforms that are vendor-neutral, compliance-aware, and engineered for long-term operation. If automation is moving from an engineering discussion to an executive decision in your organization, let's talk.

Contact Us

Before automating, leaders should be able to answer:

If these answers are unclear, ROI will be fragile.

Automating sample-to-report workflows is not a tactical exercise in speeding up pipelines. It is a strategic platform decision that determines whether a precision medicine program can operate reliably at scale, under regulatory scrutiny, and in the face of long-term cost pressure.

When automation is designed as a platform capability embedded with governance, reproducibility, observability, and cost controls, it creates systems that leadership can stand behind. These platforms support predictable turnaround times, defensible audit outcomes, controlled cloud spend, and the ability to evolve interpretation logic without operational disruption.

Organizations that treat automation as a shortcut to infrastructure often experience short-term gains, followed by escalating rework, compliance risks, and technical debt. In contrast, those that invest early in platform-grade automation achieve durable ROI by reducing operational friction, lowering reanalysis costs, and preserving trust as genomic programs expand.

For decision-makers responsible for precision medicine platforms, the question is no longer whether automation is necessary. It is whether the automation strategy is intentional, governed, and supported by a partner capable of designing systems for long-term operation, not just initial deployment.

At this stage, automation becomes less about efficiency and more about credibility, economics, and sustainability. The organizations that recognize this distinction early are the ones best positioned to scale precision medicine with confidence.

Most organizations don’t see risk until volume, audits, or reinterpretation events expose it. Common warning signs include:

If any of these exist, automation may already be overdue rather than optional.

In production genomics platforms, failure rarely starts in compute performance. It usually begins with:

These failures compound quietly and surface only when regulatory scrutiny or clinical escalation forces reconstruction.

Manual workflows externalize compliance into documents, SOPs, and people. Automated workflows internalize compliance into system behavior.

In practice, automation enables:

This typically reduces audit preparation time and risk exposure significantly as scale increases.
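One way to picture compliance internalized into system behavior is an append-only audit trail in which each entry is chained to the previous one by a hash, so later edits are detectable. The following is a minimal sketch under that assumption; the `AuditLog` class and its fields are hypothetical, and a production system would also record timestamps and persist entries in durable storage.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash that precedes the first entry
FIELDS = ("actor", "action", "target", "prev")


def _digest(body: dict) -> str:
    """Hash an entry body deterministically."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


class AuditLog:
    """Hypothetical in-memory, hash-chained audit trail."""

    def __init__(self):
        self._entries = []

    def append(self, actor: str, action: str, target: str) -> None:
        prev_hash = self._entries[-1]["hash"] if self._entries else GENESIS
        body = {"actor": actor, "action": action, "target": target, "prev": prev_hash}
        self._entries.append({**body, "hash": _digest(body)})

    def verify(self) -> bool:
        """Return True only if every entry matches its hash and its predecessor."""
        prev_hash = GENESIS
        for entry in self._entries:
            body = {key: entry[key] for key in FIELDS}
            if entry["prev"] != prev_hash or entry["hash"] != _digest(body):
                return False
            prev_hash = entry["hash"]
        return True


log = AuditLog()
log.append("pipeline", "variant_calling_completed", "S-001")
log.append("reviewer_a", "report_signed_off", "S-001")
intact = log.verify()

# Simulate an after-the-fact edit to show that tampering is detected.
log._entries[0]["action"] = "edited"
tampered_ok = log.verify()

print(intact, tampered_ok)
```

The point of the sketch is that evidence is produced as a side effect of normal operation, rather than assembled by hand before an audit.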

It depends on how automation is designed.

Tool-driven automation often creates lock-in by embedding logic directly into vendor-specific orchestration layers. Platform-grade automation separates:

When designed correctly, automation reduces vendor dependency rather than increasing it.
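The separation described above can be sketched in a few lines: workflow steps are defined as plain functions and data, and anything orchestrator-specific sits behind a narrow interface. Every name here (`Step`, `Executor`, `LocalExecutor`) and the coverage threshold of 30 are illustrative assumptions, not a reference to any particular engine or clinical standard.

```python
from dataclasses import dataclass
from typing import Callable, Protocol


@dataclass(frozen=True)
class Step:
    """A workflow step: a name plus a function from context to updated context."""
    name: str
    run: Callable[[dict], dict]


class Executor(Protocol):
    """The only surface an orchestration backend has to implement."""
    def execute(self, steps: list, context: dict) -> dict: ...


class LocalExecutor:
    """Runs steps in-process; a scheduler or cloud adapter would expose the same method."""

    def execute(self, steps: list, context: dict) -> dict:
        for step in steps:
            context = step.run(context)
        return context


# Workflow logic lives here, independent of where or how it is executed.
def quality_check(ctx: dict) -> dict:
    # The threshold of 30 is a hypothetical value for illustration only.
    return {**ctx, "qc_passed": ctx["mean_coverage"] >= 30}


def release_report(ctx: dict) -> dict:
    return {**ctx, "report": "ready" if ctx["qc_passed"] else "held"}


WORKFLOW = [Step("quality_check", quality_check), Step("release_report", release_report)]

executor: Executor = LocalExecutor()
passed = executor.execute(WORKFLOW, {"sample_id": "S-001", "mean_coverage": 42})
held = executor.execute(WORKFLOW, {"sample_id": "S-002", "mean_coverage": 12})

print(passed["report"], held["report"])
```

Because the workflow definition never imports an orchestrator, replacing the execution backend means writing one new adapter rather than rewriting the steps.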

Genomic reinterpretation is inevitable as reference databases, guidelines, and clinical knowledge evolve.

Automation supports this by:

Without automation, reinterpretation becomes expensive, risky, and operationally disruptive.

1. Automation may be premature when:

2. Automation becomes unavoidable when:

The transition point is often earlier than teams expect.

Automation succeeds when organizations can sustain:

Without these, automation delivers short-term gains but fragile long-term ROI.

If your sample-to-report workflows are becoming operationally critical, a structured platform assessment can clarify where automation will deliver durable ROI and where hidden risks may already exist.

Talk to NonStop about evaluating your current workflows, governance maturity, and platform readiness.

Contact Us